As a digital marketer, you’re likely always on the lookout for new methods and strategies to improve your website’s performance and user experience. One such technique is multivariate testing, a powerful approach that helps you optimize various aspects of your website simultaneously. In this article, we’ll explore the multivariate testing basics, providing an overview of this valuable digital marketing strategy and showing you how it can become an integral part of your website optimization techniques.

Key Takeaways

- Learn the fundamentals of multivariate testing and how it can enhance your digital marketing efforts.

- Understand the importance of multivariate testing in optimizing user experiences and conversion rates.

- Discover the differences between multivariate testing and other common testing methods, such as A/B testing.

- Gain insight into the multivariate testing process, from identifying variables to creating variations and analyzing results.

- Brush up on best practices for executing successful and effective multivariate tests.

Disclaimer: Please note that we use button colors, fonts, and headlines as a way to clarify the concept of online testing. Successful AB and multivariate tests will include more sophisticated changes to your page.

What is Multivariate Testing?

Multivariate Testing, or MVT testing, is a testing method where multiple variations of multiple elements on a webpage are combined to determine the best combination of elements on the page to increase conversions.

It’s a key testing method in conversion rate optimization.

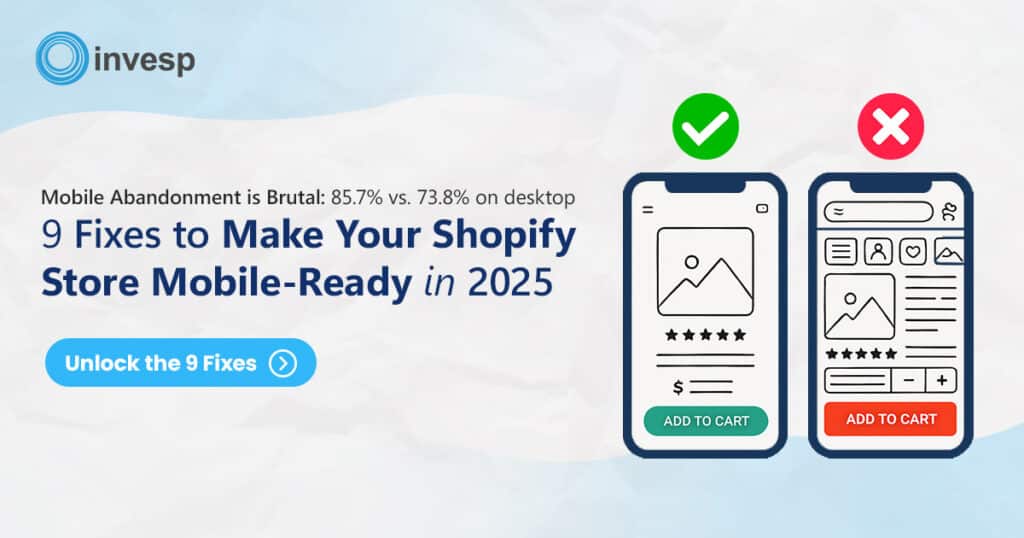

By using a multivariate testing tool, you can test different variations of any element on your page (headlines, images, buttons, etc.) to measure their impact on your conversion rates. The following image displays an example of how MVT testing software works.

In this example, the online testing software tests different variations of the page headline, image, and the “call to action” button:

- The original headline is tested against three other possible headlines for a total of four possible headlines on the page.

- The original image is tested against two other possible photos for a total of three possible pictures on the page.

- Three different buttons are tested against the original button on the page, for a total of four possible buttons on the page.

As a visitor arrives at a page, the multivariate testing tool picks one variation of each element to display (one of the four headlines, one of the three images, and one of the four buttons).

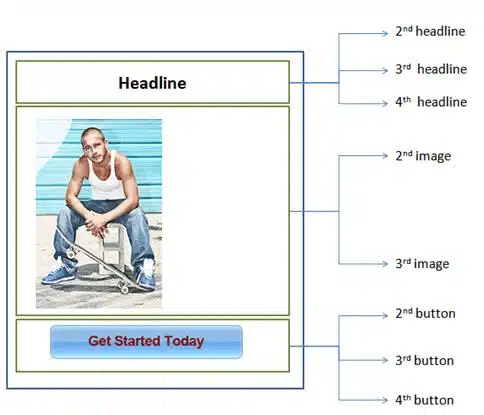

Your team does not have to create all 48 designs (4 headlines x 3 images x 4 buttons); the multivariate testing software swaps in the different variations and generates all 48 possible page designs automatically. The following image shows four of the 48 possible designs the testing software can generate.

The total number of testing variations (also called challengers) depends on the number of elements you will test on a page (headline, image, buttons, etc.) and the number of variations you will test for each of these elements.

You can calculate the total number of challengers in a multivariate test by multiplying the number of different variations of each of the elements.

For a webpage in which we will be testing (N) number of elements, we calculate:

Total number of page variations = Number of variations of 1st element x Number of variations of the 2nd element x Number of variations of the 3rd element x …x Number of variations of the Nth element

The number of page variations can grow very fast. Some testing software allows you to test tens of thousands (sometimes millions) of variations of a single page.
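To make the multiplication concrete, here is a minimal Python sketch that uses the headline, image, and button counts from the example above. The variation labels (`H1`, `I1`, `B1`, and so on) are placeholders invented for illustration; they are not names from any testing tool.

```python
from itertools import product
from math import prod

# Hypothetical labels for the variations of each element (original included).
elements = {
    "headline": ["H1", "H2", "H3", "H4"],
    "image": ["I1", "I2", "I3"],
    "button": ["B1", "B2", "B3", "B4"],
}

# Total page variations = product of the number of variations per element.
total_variations = prod(len(options) for options in elements.values())

# Enumerate every combination, which is the work the testing tool does for you.
combinations = list(product(*elements.values()))

print(total_variations)  # 48
print(combinations[0])   # ('H1', 'I1', 'B1')
```

Adding one more element with three variations would triple the total to 144, which is why the count grows so quickly.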

Multivariate Test Examples

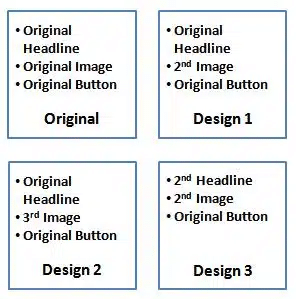

Let’s take the product page from Jambys as an example:

On this page, you can test different:

- Variations of headlines.

- CTA texts.

- Variations of offers.

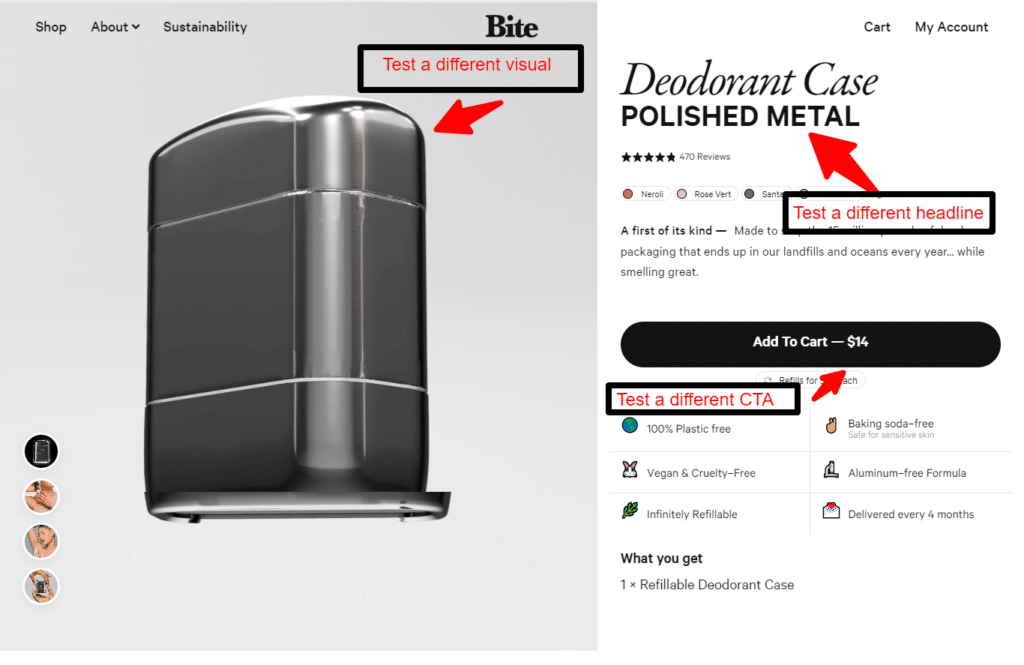

Let’s take an example from Bite:

On this page, you can test:

- different variations of the headline

- displaying a different visual

- CTA text

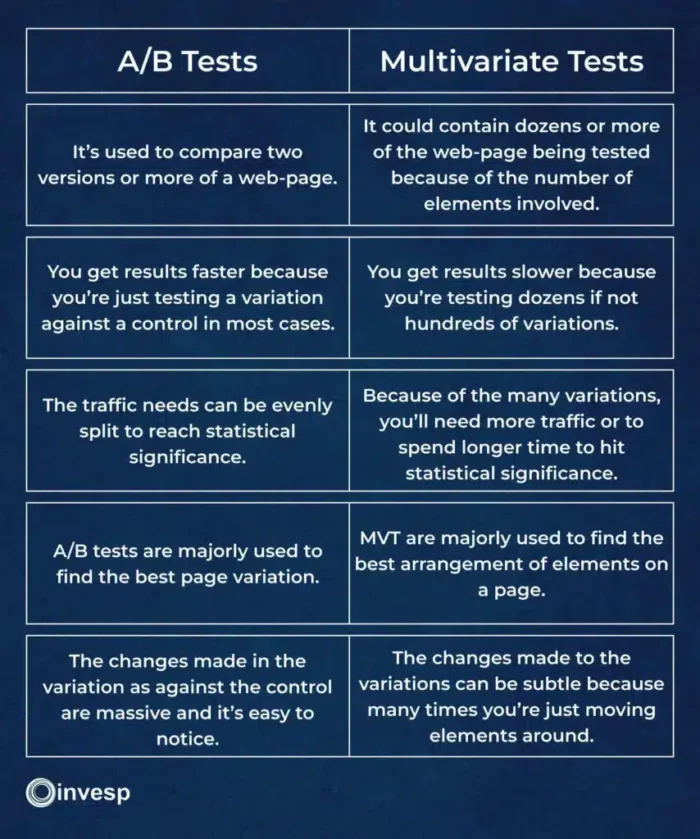

A/B Tests vs. Multivariate Tests

If you’re just getting started with running online tests, you’re probably asking yourself what multivariate testing is and how it differs from other testing methods, such as A/B testing.

Let’s dive in and explore the importance of this approach in the digital marketing realm.

While A/B testing involves comparing two versions of a web page or app to determine which one performs better, multivariate testing goes a step further. It compares multiple combinations of elements on a single page, allowing marketers to analyze the interactions between these elements and understand their combined effects on key performance indicators (KPIs), such as conversion rates, click-through rates, and engagement levels.

RELATED ARTICLE: A/B Testing Vs Multivariate Testing: When To Use Multivariate Testing Or A/B Split Testing

This method often proves more efficient than A/B testing, as it enables the testing of numerous variables simultaneously. Consequently, multivariate testing can reveal subtle yet significant differences in the performance of various element combinations – insights unachievable through simple A/B comparisons.

The importance of multivariate testing lies in its ability to provide actionable insights into the relationships between various webpage elements, which are crucial for developing highly effective marketing strategies. By employing this data-driven approach, marketers can make strategic decisions backed by solid evidence rather than relying solely on intuition or experience.

How to Create a Successful Multivariate Test

Multivariate testing software allows marketers to create and start simple tests of landing pages or web pages in a few hours.

But that is the easy part.

Many companies ultimately fail when designing successful test scenarios, assessing results, and creating meaningful follow-up tests.

Poorly designed experiments can take years to conclude. Even worse, they might not provide accurate insights into which elements convert more visitors into customers, so you learn little from the effort.

Imagine a case where you plan to test different headlines on a page. You start by coming up with ten different possible variations to the headlines. Which of these ten possible headlines should you test against your original headline? Why not test all of them? Why not test variations of images, buttons, and layouts?

You will likely find yourself relying on guesswork to determine which versions to include in the test. The same logic, of course, applies to all elements you want to test on a web page.

Without being judicious with test scenarios, you might attempt to test millions of combinations.

Testing is an essential component of any conversion rate optimization project. However, it should not be the only component. Testing should only occur after the conclusion of other equally critical optimization stages, such as persona development, voice-of-customer research (including polls and surveys), heuristic evaluation, usability testing, site analysis, and design and copy creation. Each stage provides a building block for a highly optimized website that converts visitors into customers.

To create a successful test, you must go through the following steps:

- Evaluate the page, looking for possible problems in it

- Prioritize the issues identified on the page in terms of their impact on your conversion rate

- Create a hypothesis of how to fix some of the top issues on the page and how your fix will affect your conversion rate.

- Test the validity of your hypothesis through multivariate or A/B testing.

- Analyze the test results to determine whether your hypothesis was correct.

- Create a new test based on the test result.

The results from running multivariate testing

While MVT testing is powerful in helping online businesses increase conversion rates, the results you will achieve from running a single test may vary.

You can choose different approaches to design and create your multivariate test:

1. Element level testing:

In this type of testing, you test different variations of an element on the page. For example, you test different headline variations or several images. The goal of an “element level test” is to measure that element’s impact on your conversion rate.

Element-level testing is considered the easiest type of testing and requires the least effort. In most cases, it has minimal impact on your website’s conversion rates.

2. Page-level testing:

In this type of testing, you test multiple page elements simultaneously. For example, you can test different landing page layouts and/or a different combination of elements and so on. Page-level testing requires more effort from the development team to implement and generates a higher impact on your conversion rates than element-level testing.

Carefully designed page-level testing can produce a 10% to 20% increase in conversion rates.

3. Visitor flow testing:

In this type of testing, you test several navigation paths for visitors within your website. For example, an e-commerce website might test single-step vs. multi-step checkout. Another example is to test different ways visitors can navigate from category pages to product pages.

Visitor flow testing can get complicated quickly. It typically requires more effort from your development team to implement. Done correctly, this type of testing will have a higher impact on your conversion rates than page-level testing.

Full Factorial or Fractional Factorial MVT. Which is best?

When people talk about multivariate testing, they usually refer to full factorial testing. In this type of multivariate test, the traffic is distributed equally among every variation.

Let’s say, for instance, you’ve got 10 variations based on the number of variables you’re testing, and the page has 1000 unique visitors. When you do your math, an equal distribution of traffic here means every variation gets 100 visitors.
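As a rough illustration of an even split, the sketch below buckets visitors by hashing a visitor ID. This is one common approach and an assumption on our part; it is not a description of how any particular testing tool allocates traffic. The function name `assign_variation` and the `visitor-N` IDs are hypothetical.

```python
import hashlib

def assign_variation(visitor_id: str, num_variations: int) -> int:
    """Map a visitor to a variation index in the range [0, num_variations)."""
    digest = hashlib.sha256(visitor_id.encode("utf-8")).hexdigest()
    # The same visitor ID always hashes to the same variation.
    return int(digest, 16) % num_variations

# Simulate 1,000 unique visitors spread across 10 variations.
counts = [0] * 10
for n in range(1000):
    counts[assign_variation(f"visitor-{n}", 10)] += 1

print(counts)  # close to 100 per variation, though rarely exactly 100
```

Hashing keeps the assignment stable, so a returning visitor sees the same variation on every visit.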

Now, because each variation gets the same amount of traffic, this test type is best for determining which particular variation performed best.

Much more than finding out the winning variation, it allows you to single out the element in the variation that had the most impact in improving the conversion rate.

It’s important you know that in a winning variation, not all elements perform equally. The position of the testimonials might have had the most impact on the winning variation, while the headline pulled no weight.

Partial or Fractional Factorial Multivariate Testing.

The name gives it away. Unlike full factorial testing, which requires every variation to receive traffic, fractional factorial testing sends traffic to only a subset of the variations.

The other variations get no traffic; their conversion rates are inferred from the variations that did.

This type of MVT testing requires some hard math for conversion rate inference, plus assumptions about the variations that didn’t get traffic.
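To show what "a subset of the variations" can look like, here is a small sketch of a textbook half-fraction design. It assumes three elements with two variations each (coded 0 for the original and 1 for the challenger); real tools may choose their subsets differently.

```python
from itertools import product

# Assumption: three elements, each with two variations coded 0 and 1.
full_factorial = list(product([0, 1], repeat=3))  # all 8 combinations

# Half fraction: keep only combinations with an even number of challengers.
half_fraction = [combo for combo in full_factorial if sum(combo) % 2 == 0]

print(len(full_factorial))  # 8
print(half_fraction)        # [(0, 0, 0), (0, 1, 1), (1, 0, 1), (1, 1, 0)]
```

Each element still appears as 0 in two runs and as 1 in two runs, so its individual effect can be estimated from half the combinations. The price is that each element’s effect becomes entangled with the interaction of the other two, which is exactly the kind of assumption the inference depends on.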

Professional tip: I advise you to always go with the full-factorial multivariate test. It gives you measured data for every variation, which is better than inferences.

Do’s and Don’ts Of Multivariate Testing

Do’s:

1. Decide which sections should be included in the test:

Not every element has the same impact on the conversion rate.

Suppose you include a headline, a testimonial section, and a footer in the test. You might find that the footer has little to no impact on that page’s conversion rate or user engagement.

So weigh the likely conversion impact of each element and section before adding it to the test.

2. Preview every combination:

This is a mistake even experienced experimenters make at times: they forget to preview the combinations that the element variations produce.

This is important because you don’t want to have a variation where the header reads 20% off while the call-to-action button reads free samples. Both messages in this variation are incompatible. Previewing helps you detect these errors and remove them.
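A preview still has to be done by eye, but known conflicts can be screened out first. The sketch below is a minimal illustration under our own assumptions: the headline and button texts and the `incompatible` rule list are invented for the example.

```python
from itertools import product

# Hypothetical variations for two elements.
headlines = ["20% off", "Free shipping"]
buttons = ["Claim 20% off", "Get free samples"]

# Hypothetical rule list: (headline, button) pairs whose messages clash.
incompatible = {("20% off", "Get free samples")}

all_combos = list(product(headlines, buttons))
valid_combos = [combo for combo in all_combos if combo not in incompatible]

print(len(all_combos))    # 4
print(len(valid_combos))  # 3
```

Removing combinations also reduces the number of variations competing for traffic, so the remaining ones reach a usable sample sooner.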

3. Estimate the traffic for significant results:

With ten variations on a page that gets only a hundred visitors, it will take a long time to achieve statistical significance.

To avoid launching a test that cannot reach valid results in a reasonable time, learn to estimate the amount of traffic it will require.

Here’s a simple method. First, use your web analytics to find out how much traffic the page gets. Second, decide how many sections or elements you want to test and how many variations each will have. Then calculate the total number of page variations:

The total number of page variations = Number of variations of 1st element x Number of variations of the 2nd element x Number of variations of the 3rd element x …x Number of variations of the Nth element.

Now, divide the page’s traffic by the number of variations. If the traffic each variation would receive is small, a multivariate test might not be a good fit for that page.
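The division can be sketched in a few lines of Python. The figure of 10,000 monthly visitors is a made-up number for illustration, and `visitors_per_variation` is a hypothetical helper name.

```python
from math import prod

def visitors_per_variation(page_visitors: int, variations_per_element: list[int]) -> float:
    """Divide page traffic evenly across all page variations."""
    total_variations = prod(variations_per_element)
    return page_visitors / total_variations

# Hypothetical page: 10,000 visitors a month, testing 4 headlines, 3 images, 4 buttons.
per_variation = visitors_per_variation(10_000, [4, 3, 4])
print(round(per_variation, 1))  # 208.3
```

Roughly 208 visitors per variation per month is very little data, which suggests trimming the number of elements or variations before launching.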

Don’ts

1. Don’t include a lot of sections or elements:

The more elements and sections you test, the more variations you get. This matters because every additional variation increases the traffic you need to reach statistical significance.

The Pros Of Multivariate Testing

1. Ability to test more variations.

2. You better understand the impact of individual elements on conversion rate.

3. Save time because you don’t have to conduct individual A/B tests.

The downsides of multivariate testing

If you are not careful with planning your multivariate tests, you will end up with weak-quality tests that take too long to implement and produce neither results nor insights.

You must always remember that testing (AB or multivariate) is only one component of conversion rate optimization work.

We have seen many companies that entirely relied on testing software without doing an in-depth analysis of what they were testing. Our 2007 article on the case against multivariate testing points out this example:

Let’s do some simple math.

Say you want to test six different elements on a page (headers, benefits list, hero shots, call to action, etc).

For each element, you will choose four different options. This means you will have a total of 4^6 = 4,096 possible scenarios that you will have to test.

As a general rule of thumb [being more aggressive], you will need around 200 conversions per scenario to ensure the data you are collecting is statistically significant. This translates into 4,096 * 200= 819,200 conversions.

If your website converts around 1%, you will need 819,200 * 100=81,920,000 visitors before you start gaining some confidence in your test results.

If testing 4,096 variations sounds difficult, imagine how complicated matters will get by adding variation in campaigns, offers, products, and keywords. Yes, running that many test variations is not unheard of for many larger websites.
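The arithmetic in this example is easy to reproduce and adapt to your own numbers. The sketch below uses the figures quoted above; the variable names are our own.

```python
elements = 6                    # elements tested on the page
options_per_element = 4         # options chosen for each element
conversions_per_scenario = 200  # rule of thumb quoted above
visitors_per_conversion = 100   # equivalent to a 1% conversion rate

scenarios = options_per_element ** elements
conversions_needed = scenarios * conversions_per_scenario
visitors_needed = conversions_needed * visitors_per_conversion

print(scenarios)           # 4096
print(conversions_needed)  # 819200
print(visitors_needed)     # 81920000
```

Dropping to three elements with three options each gives 27 scenarios and, under the same assumptions, 540,000 visitors, which shows how much the required traffic depends on test scope.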

Possible Problems When Creating A Multivariate Test.

1. Beware of creating the test without paying close attention to the hypothesis behind it:

A faulty hypothesis means your test won’t yield good insights that will impact your business’s bottom line.

Also, creating a multivariate test without a hypothesis results in failure.

There are different templates for creating a hypothesis online, but you can use the one below:

Changing (the element being tested) from ___________ to ___________ will increase/decrease (the defined measurement).

2. Be mindful of the number of variables you are testing and their dependency on one another:

You don’t test an element just because it’s available on the page; you need to understand how each element impacts the others.

Also, remember that the more elements you test, the more variations you create. This means you’ll want to test fewer elements if the page doesn’t receive much traffic or many conversions.

3. Be aware of the length of time it will take to complete the test.

Before launching any test at all, it’s good practice to calculate how long it’ll take for the test to achieve statistical significance.

It means having a good grasp of certain numbers, such as:

- sample size

- number of visitors to the page.

Here’s the formula you can use:

Expected experiment duration (in days) = total required sample size / number of daily visitors to the tested page.
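Here is a minimal sketch of that calculation. It assumes the sample size is the total across all variations and that visitors are counted per day; the input figures and the function name `expected_duration_days` are hypothetical.

```python
from math import ceil

def expected_duration_days(sample_size_per_variation: int,
                           num_variations: int,
                           daily_visitors: int) -> int:
    """Estimate test duration in days, rounded up to a whole day."""
    total_sample_size = sample_size_per_variation * num_variations
    return ceil(total_sample_size / daily_visitors)

# Hypothetical test: 48 variations, 2,000 visitors needed for each,
# on a page that receives 3,000 visitors a day.
print(expected_duration_days(2_000, 48, 3_000))  # 32
```

If the result runs to many months, that is a signal to reduce the number of variations or to fall back to an A/B test.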

4. Traffic Allocation:

As the possible number of variations goes up, you’ll need more traffic to complete the test on time.

In an A/B test, you can simply split the traffic 50-50 between the control and the variation. A multivariate test is different: because you have many more variations, each one receives a much smaller share of the traffic (it could be 5% to one variation, 10% to another, and so on), so the test takes longer to complete.

Professional tip: Calculate the sample size per variation before running the multivariate test. If the traffic on the page you want to test won’t be good enough to achieve statistical significance, I suggest you go for an A/B test.
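One way to estimate the sample size per variation is the standard normal-approximation formula for comparing two conversion rates. The sketch below is an assumption-laden starting point, not the method any particular tool uses: the 5% significance level, 80% power, baseline rate, and expected lift are all inputs you would choose yourself.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_variation(baseline_rate: float,
                              relative_lift: float,
                              alpha: float = 0.05,
                              power: float = 0.80) -> int:
    """Visitors needed per variation to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

# Hypothetical page: 5% baseline conversion rate, hoping to detect a 20% relative lift.
needed = sample_size_per_variation(0.05, 0.20)
print(needed)
```

Multiply the result by the number of variations to get the total traffic the test needs. Note that this formula does not correct for comparing many variations at once, so treat it as a lower bound.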

5. Complexity in analyzing results:

A/B tests are simpler to understand, especially in analyzing the result. You have a hypothesis driving the A/B test; you can easily deduce why the control won or didn’t.

This is not the case for a multivariate test. A variation has many elements working simultaneously, so analyzing the result requires mental gymnastics because you need to explain how the individual elements interact.

Multivariate Testing FAQ

What is the main difference between multivariate testing and A/B testing?

While A/B testing compares two versions of a page (often differing in a single element), multivariate testing examines multiple variables simultaneously to determine the best-performing combination of elements. A/B testing may be more suitable for simpler experiments, while multivariate testing offers deeper insights in more complex scenarios.

How can I choose which variables to test in a multivariate experiment?

Focus on elements with the greatest potential impact on user behavior or conversion rates, such as headlines, images, and call-to-action buttons. Analyze your website’s analytics to identify areas with room for improvement or elements generating the most engagement.

Do I need specialized software or platforms to set up a multivariate test?

Yes. Various tools are available for conducting multivariate tests, such as Optimizely and VWO (Visual Website Optimizer). Google Optimize, formerly a popular option, was discontinued in September 2023. These tools help create a controlled test environment, allocate traffic, and gather accurate data, streamlining the testing process.

How do I determine if my multivariate test results are statistically significant?

Statistical significance indicates the likelihood that the observed test results are not due to chance. Most multivariate testing tools calculate statistical significance automatically. A general guideline is to aim for a confidence level of at least 95%.
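If you want to sanity-check a tool’s verdict, a two-proportion z-test is one standard way to compare a variation against the control. The sketch below assumes that test; your tool may use a different method (some use Bayesian statistics), and the visitor and conversion counts are invented for illustration.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_p_value(conv_a: int, visitors_a: int,
                           conv_b: int, visitors_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))

# Hypothetical data: control converts 200 of 5,000 visitors, variation 260 of 5,000.
p_value = two_proportion_p_value(200, 5000, 260, 5000)
significant = p_value < 0.05  # corresponds to the 95% confidence guideline
print(significant)  # True
```

With many variations compared against one control, the chance of a false positive rises, so a stricter threshold than 0.05 per comparison is often advisable.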

What are some best practices for effective multivariate testing?

Best practices include selecting the right metrics to evaluate, understanding sample size and test duration, avoiding common pitfalls, and ensuring test validity. Analyze your website analytics, choose variables and variations strategically, ensure proper test environment setup, and leverage the resulting insights for a data-driven marketing strategy.